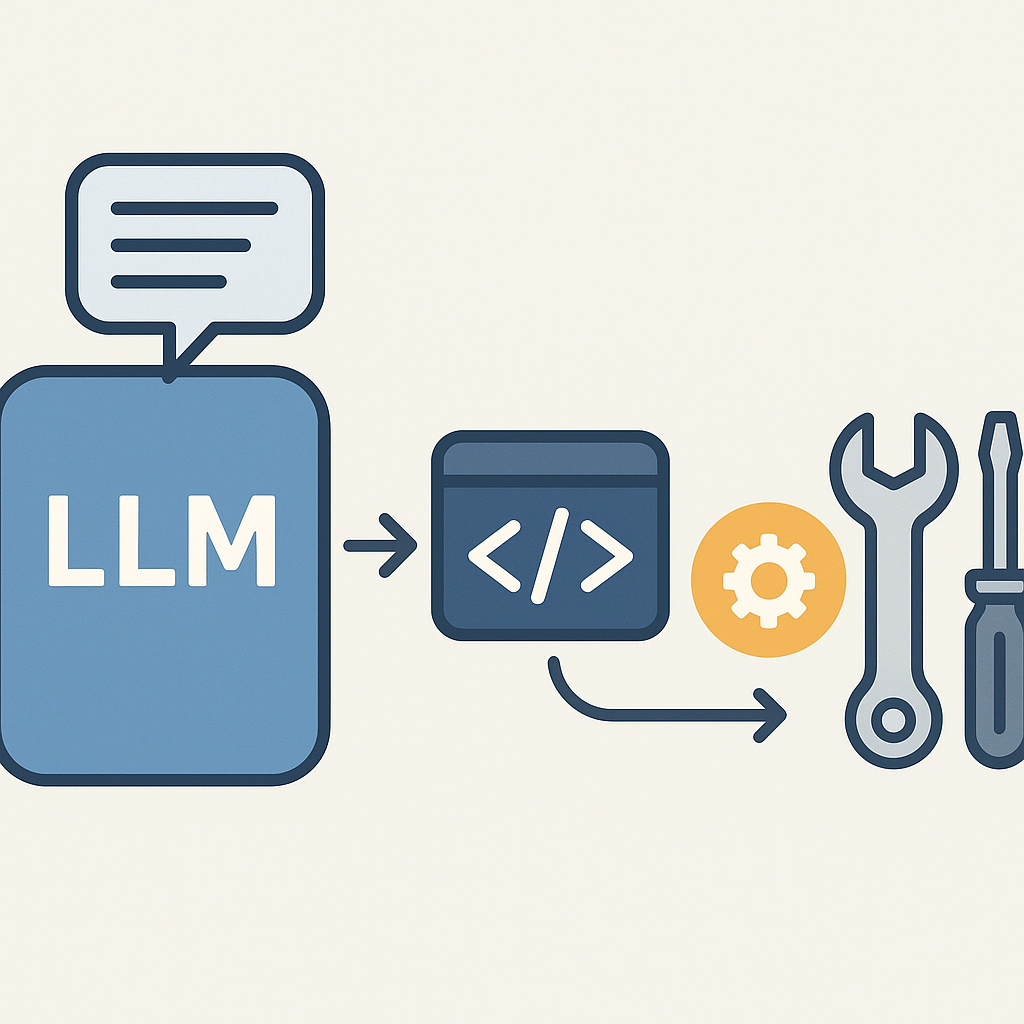

Designing scalable LLM tool calling architectures means balancing orchestration complexity against system resilience, and the choice between synchronous and event-driven patterns significantly affects latency and throughput. Backend engineers must weigh retry strategies and error boundaries against rate limiting constraints to maintain service levels. Fallback designs and asynchronous workflows largely determine how gracefully the architecture handles failures and how well it scales.


Overview

Designing scalable LLM tool calling architectures requires balancing synchronous and event-driven orchestration to optimize latency and throughput. Backend engineers must implement robust retry strategies, rate limiting, and error boundaries to maintain resilience under load. Asynchronous workflows enable decoupling of tool invocation from LLM processing, improving scalability and fault tolerance. Hybrid architectures that combine synchronous calls for critical paths with asynchronous event queues for non-blocking tasks offer operational flexibility. Effective monitoring and fallback mechanisms are essential to detect failures early and gracefully degrade functionality, ensuring reliable AI integration in SaaS backends.

Key takeaways

- Synchronous orchestration offers simplicity but can increase LLM latency and reduce throughput under load.

- Event-driven async…

Decision Guide

- Choose synchronous orchestration when low latency and immediate results are critical

- Opt for asynchronous event-driven workflows if throughput and fault isolation are priorities

- Use circuit breakers when tool reliability is variable or unknown

- Implement retries with backoff only for transient errors; do not retry permanent failures

- Adopt hybrid models to optimize for mixed workload patterns and SLA tiers

- Avoid tight coupling between LLM and tool APIs to facilitate independent scaling

- Prioritize monitoring integration early to enable data-driven operational decisions

Overusing synchronous calls can bottleneck your system under load, but excessive async decoupling may increase complexity and response times. Balance the two based on SLA requirements.
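To make the circuit breaker item in the guide concrete, here is a minimal sketch. The class name, the thresholds, and the injectable `clock` are all assumptions made for illustration; a production breaker would likely also need thread safety and per-tool state.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    """Hypothetical breaker: opens after N consecutive failures."""

    def __init__(self, failure_threshold: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after  # seconds to wait before a trial call
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, tool: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("tool temporarily disabled")
            # Cool-down elapsed: allow one trial call (half-open state).
            # One more failure re-opens the breaker immediately.
            self.opened_at = None
            self.failures = self.failure_threshold - 1
        try:
            result = tool(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # any success closes the breaker fully
        return result
```

Wrapping each unreliable tool in its own breaker keeps one failing dependency from consuming the latency budget of every request that touches it.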

Step-by-step

Analyze LLM tool calling architecture focusing on orchestration layers and retry strategies for resilience.
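As one possible shape for the retry layer, the sketch below retries only errors classified as transient and uses exponential backoff with full jitter. The two exception classes, the function name, and the default delays are hypothetical; how errors are classified depends on the tool APIs in use.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientToolError(Exception):
    """Hypothetical: timeouts or throttling responses that are safe to retry."""


class PermanentToolError(Exception):
    """Hypothetical: invalid arguments or auth failures; retrying cannot help."""


def call_with_retry(tool: Callable[[], T], max_attempts: int = 4,
                    base_delay: float = 0.5, max_delay: float = 8.0,
                    sleep: Callable[[float], None] = time.sleep) -> T:
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return tool()
        except TransientToolError:
            if attempt == max_attempts:
                raise  # retry budget exhausted; surface the error
            # Exponential backoff with full jitter, capped at max_delay.
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, cap))
        # PermanentToolError (and anything else) propagates on the first attempt.
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter is a design choice that keeps the function testable without real delays.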

Compare synchronous vs event-driven orchestration workflows impacting latency and throughput metrics.
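The difference between the two styles can be sketched with the standard library alone. In this illustration, `run_sync` blocks the caller for each tool call, while `run_event_driven` pushes jobs onto an `asyncio.Queue` that a pool of workers drains. The function names and the job format are assumptions; a real deployment would more likely use an external message broker than an in-process queue.

```python
import asyncio
from typing import Any, Callable, Dict

Tool = Callable[[Dict[str, Any]], Any]


def run_sync(tool: Tool, args: Dict[str, Any]) -> Any:
    # Synchronous style: the caller blocks until the tool returns.
    return tool(args)


async def _worker(queue: asyncio.Queue, tool: Tool, results: Dict[str, Any]) -> None:
    while True:
        job_id, args = await queue.get()
        try:
            # Run the blocking tool in a thread so the event loop stays free.
            results[job_id] = await asyncio.to_thread(tool, args)
        except Exception as exc:
            results[job_id] = exc  # one failed job does not stop the worker
        finally:
            queue.task_done()


async def run_event_driven(tool: Tool, jobs: Dict[str, Dict[str, Any]],
                           concurrency: int = 4) -> Dict[str, Any]:
    queue: asyncio.Queue = asyncio.Queue()
    results: Dict[str, Any] = {}
    workers = [asyncio.create_task(_worker(queue, tool, results))
               for _ in range(concurrency)]
    for job_id, args in jobs.items():
        queue.put_nowait((job_id, args))
    await queue.join()  # wait until every job has been processed
    for w in workers:
        w.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results
```

The synchronous path has lower per-call overhead, whereas the queued path lets several tool calls overlap and isolates a failing job from the rest.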

Implement rate limiting and error boundaries to enhance system stability and fallback design.
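A rough sketch of this step, assuming a token bucket for rate limiting and a wrapper function as the error boundary. `TokenBucket`, `guarded_call`, and the result dictionary shape are illustrative names, not part of any specific framework.

```python
import time
from typing import Any, Callable, Dict


class TokenBucket:
    """Hypothetical limiter: refills `rate` tokens per second up to `capacity`."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def try_acquire(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def guarded_call(bucket: TokenBucket, tool: Callable[..., Any],
                 **kwargs: Any) -> Dict[str, Any]:
    """Error boundary: always return a structured result instead of raising."""
    if not bucket.try_acquire():
        return {"ok": False, "error": "rate_limited"}
    try:
        return {"ok": True, "result": tool(**kwargs)}
    except Exception as exc:
        return {"ok": False, "error": type(exc).__name__}
```

Returning a structured error rather than raising lets the orchestration layer pass the failure back to the LLM or route it to a fallback.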

Design hybrid architectures combining sync and async tool calls to optimize batch processing and responsiveness.
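One way a hybrid dispatcher might look: tools flagged as critical run inline, and everything else is queued for background processing. `ToolSpec`, `HybridDispatcher`, and the `critical` flag are assumptions for this sketch, and `drain` stands in for what would normally be a separate worker process.

```python
import queue
import uuid
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass
class ToolSpec:
    fn: Callable[..., Any]
    critical: bool  # True: the LLM needs the result before it can continue


class HybridDispatcher:
    def __init__(self, tools: Dict[str, ToolSpec]) -> None:
        self.tools = tools
        self.background: queue.Queue = queue.Queue()

    def dispatch(self, name: str, **kwargs: Any) -> Dict[str, Any]:
        spec = self.tools[name]
        if spec.critical:
            # Synchronous path: run inline and return the result directly.
            return {"status": "done", "result": spec.fn(**kwargs)}
        # Asynchronous path: enqueue and hand back a ticket immediately.
        job_id = uuid.uuid4().hex
        self.background.put((job_id, name, kwargs))
        return {"status": "queued", "job_id": job_id}

    def drain(self) -> Dict[str, Any]:
        """Process all queued jobs; a stand-in for a background worker."""
        results: Dict[str, Any] = {}
        while True:
            try:
                job_id, name, kwargs = self.background.get_nowait()
            except queue.Empty:
                return results
            results[job_id] = self.tools[name].fn(**kwargs)
```

A usage example under the same assumptions:

```python
dispatcher = HybridDispatcher({
    "lookup": ToolSpec(fn=lambda q: q.upper(), critical=True),
    "audit_log": ToolSpec(fn=lambda msg: len(msg), critical=False),
})
print(dispatcher.dispatch("lookup", q="abc"))
ticket = dispatcher.dispatch("audit_log", msg="hello")
print(ticket["status"])
```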

Automate tool discovery and invocation to streamline pipeline execution and reduce manual intervention.
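Tool discovery can be approximated with a decorator-based registry, as sketched below. The `tool` decorator, `describe_tools`, `invoke`, and the example `get_weather` function are hypothetical; the description format is simplified and does not follow any particular provider's tool schema.

```python
import inspect
from typing import Any, Callable, Dict, List

_REGISTRY: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function as a callable tool under its own name."""
    _REGISTRY[fn.__name__] = fn
    return fn


def describe_tools() -> List[Dict[str, Any]]:
    """Build simplified tool descriptions from signatures and docstrings."""
    specs = []
    for name, fn in sorted(_REGISTRY.items()):
        specs.append({
            "name": name,
            "description": inspect.getdoc(fn) or "",
            "parameters": list(inspect.signature(fn).parameters),
        })
    return specs


def invoke(name: str, arguments: Dict[str, Any]) -> Any:
    """Look up a tool by the name the model requested and call it."""
    if name not in _REGISTRY:
        raise KeyError(f"unknown tool: {name}")
    return _REGISTRY[name](**arguments)


@tool
def get_weather(city: str) -> str:
    """Return a canned forecast (placeholder for a real API call)."""
    return f"Sunny in {city}"
```

With this in place, adding a tool only requires decorating a function, so the descriptions sent to the model and the dispatch table cannot drift apart.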

Monitor production tool calling with observability dashboards tracking retries, errors, and performance.
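Before wiring up a dashboard, the counters it would display can be collected in process. The sketch below is a minimal, assumed design: `ToolMetrics` and its method names are illustrative, and a real system would export these values to a metrics backend instead of holding them in memory.

```python
import time
from collections import Counter, defaultdict
from typing import Any, Callable, DefaultDict, List


class ToolMetrics:
    """Hypothetical in-memory recorder for per-tool outcomes and latency."""

    def __init__(self) -> None:
        self.counts: Counter = Counter()
        self.latencies: DefaultDict[str, List[float]] = defaultdict(list)

    def observe(self, name: str, fn: Callable[..., Any],
                *args: Any, **kwargs: Any) -> Any:
        start = time.perf_counter()
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.counts[(name, "error")] += 1
            raise
        else:
            self.counts[(name, "success")] += 1
            return result
        finally:
            # Latency is recorded for successes and failures alike.
            self.latencies[name].append(time.perf_counter() - start)

    def record_retry(self, name: str) -> None:
        self.counts[(name, "retry")] += 1

    def error_rate(self, name: str) -> float:
        ok = self.counts[(name, "success")]
        err = self.counts[(name, "error")]
        total = ok + err
        return err / total if total else 0.0
```

Tracking retries separately from errors matters because a high retry count with a low error rate usually points to a slow dependency, not a broken one.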

Evaluate fallback strategies and retry metrics to improve overall system reliability and user experience.
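A fallback strategy is easier to evaluate when each response records which path produced it. The following sketch tries handlers in order and falls back to a static default; the function name and the result fields (`source`, `degraded`, `errors`) are assumptions chosen for illustration.

```python
from typing import Any, Callable, Dict, List, Sequence


def call_with_fallbacks(handlers: Sequence[Callable[..., Any]], default: Any,
                        **kwargs: Any) -> Dict[str, Any]:
    """Try each handler in order; return the default if all of them fail."""
    errors: List[str] = []
    for handler in handlers:
        try:
            result = handler(**kwargs)
        except Exception as exc:
            # Remember why this handler failed, then try the next one.
            errors.append(f"{handler.__name__}: {type(exc).__name__}")
            continue
        return {
            "result": result,
            "source": handler.__name__,
            "degraded": bool(errors),  # True if an earlier handler failed
            "errors": errors,
        }
    return {"result": default, "source": "default", "degraded": True, "errors": errors}
```

Counting how often `degraded` is true, and which `source` served the response, gives a direct measure of how much traffic depends on the fallback path.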

Common mistakes

Indexing

Over-reliance on synchronous orchestration can cause search engines to misinterpret dynamic content timing, hurting indexing.

Pipeline

Lack of robust retry and error boundary patterns in async workflows leads to pipeline failures and inconsistent tool invocation.

Measurement

Misinterpreting CTR drops as failures without correlating with impression data can mislead tool calling performance analysis.

Indexing

Ignoring canonical URLs when integrating multiple tool calling strategies risks duplicate content and deindexing.

Pipeline

Not implementing rate limiting and fallback design in orchestration layers causes system overload and pipeline bottlenecks.

Measurement

Using raw click counts without segmenting by user intent or session context skews GA4 metrics for tool calling features.

Conclusion

This architecture works well when backend teams balance orchestration complexity with operational resilience, especially under variable load and tool reliability. It may fail in environments demanding ultra-low latency or when tooling ecosystems rapidly change without automated discovery and monitoring.